Are 4K UHD monitors worth it? - Part 2 of 4

Part 2 of 4 from 'Understanding 4k UHD'. HowToAV welcomes AV integration expert Neil Walton from CYP to discuss 4K UHD (Ultra High Definition) video content.

Why have we gone with 4K UHD and not something bigger?

UHD was chosen as a broadcast standard because it is a matter of simple scaling. A 1080p image (1,920 × 1,080 pixels) is exactly a quarter of the size of a UHD image, because UHD is twice as wide and twice as high as 1080p. 4K UHD means the screen has a resolution of at least 3,840 pixels wide by 2,160 pixels high.
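The "quarter of the size" point is simple arithmetic. The short Python sketch below checks it using the 1080p and UHD resolutions given above; the variable and function names are illustrative only.

```python
# Resolutions as (width, height) in pixels.
HD_1080P = (1920, 1080)
UHD_4K = (3840, 2160)


def pixel_count(resolution):
    """Total number of pixels in one frame of the given resolution."""
    width, height = resolution
    return width * height


# UHD doubles both dimensions, so it holds 2 x 2 = 4 times as many pixels.
width_scale = UHD_4K[0] // HD_1080P[0]
height_scale = UHD_4K[1] // HD_1080P[1]
area_ratio = pixel_count(UHD_4K) / pixel_count(HD_1080P)

print(f"1080p: {pixel_count(HD_1080P):,} pixels")  # 1080p: 2,073,600 pixels
print(f"UHD:   {pixel_count(UHD_4K):,} pixels")    # UHD:   8,294,400 pixels
print(f"UHD is {width_scale}x wider, {height_scale}x taller, "
      f"{area_ratio:.0f}x the pixels")             # UHD is 2x wider, 2x taller, 4x the pixels
```

Because each UHD pixel grid maps cleanly onto a 2 × 2 block of 1080p pixels, upscaling HD content to a UHD screen needs no awkward fractional resampling.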

What should we be looking at when we're looking at 4K screens?

Frame rate - the number of frames (individual images) displayed per second. Frame rates are used in synchronizing audio and pictures, whether in film, television or video. Frame rate matters because every extra frame per second adds more data to the signal, so higher frame rates need greater bandwidth to deliver that signal.
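To see how quickly the data grows with frame rate, here is a rough Python sketch of the uncompressed bit rate for UHD. It assumes 8 bits per colour channel with three channels (24 bits per pixel) and counts active pixels only; real interfaces also carry blanking, audio and encoding overhead, so their actual requirements are higher.

```python
def uncompressed_bitrate(width, height, bits_per_pixel, fps):
    """Raw video bit rate in bits per second, active pixels only.

    Assumption: ignores blanking intervals, audio and link-encoding
    overhead, so this is a lower bound on what a cable must carry.
    """
    return width * height * bits_per_pixel * fps


# Assumption: 8 bits per colour channel x 3 channels = 24 bits per pixel.
BITS_PER_PIXEL = 24

for fps in (24, 30, 60):
    rate = uncompressed_bitrate(3840, 2160, BITS_PER_PIXEL, fps)
    print(f"UHD at {fps} fps: {rate / 1e9:.2f} Gbit/s")
# UHD at 24 fps: 4.78 Gbit/s
# UHD at 30 fps: 5.97 Gbit/s
# UHD at 60 fps: 11.94 Gbit/s
```

Doubling the frame rate doubles the raw data, which is why frame rate sits alongside resolution when judging whether a link can carry a 4K signal.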

Colour Space & Chroma Subsampling - Chroma subsampling reduces the amount of data you need to send. It is a common practice for encoding video that uses less resolution for chroma (colour) than for luma (brightness).

To save precious megabytes (or sometimes gigabytes), encoders rely on the fact that as long as every pixel keeps its own luma value, a single colour value can be shared between every four pixels. This discards 75% of the colour information, hence some degradation, but the recordings still achieve acceptable quality. This type of encoding is chroma subsampling.
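The sharing of one colour value between four pixels can be sketched in a few lines of Python. This is a simplified illustration, not how any particular encoder works: it assumes the picture is stored as one luma plane plus two colour-difference planes (Cb and Cr), and it picks each shared colour value by plain averaging over a 2 × 2 block, whereas real encoders use their own filters and sample positions. The function names are hypothetical.

```python
def subsample_chroma_2x2(plane):
    """Share one chroma value across each 2x2 block of pixels by averaging.

    `plane` is a list of rows (lists of numbers) with even width and height.
    Returns a plane with half the width and half the height.
    Assumption: simple averaging stands in for a real encoder's filter.
    """
    height, width = len(plane), len(plane[0])
    if height % 2 or width % 2:
        raise ValueError("plane dimensions must be even")
    out = []
    for y in range(0, height, 2):
        row = []
        for x in range(0, width, 2):
            block_sum = (plane[y][x] + plane[y][x + 1]
                         + plane[y + 1][x] + plane[y + 1][x + 1])
            row.append(block_sum / 4)
        out.append(row)
    return out


def samples_per_frame(width, height, shared_by=4):
    """Count (luma, chroma) samples when each chroma value covers `shared_by` pixels."""
    pixels = width * height
    luma = pixels                     # one luma sample per pixel, never shared
    chroma = 2 * pixels // shared_by  # two colour-difference planes (Cb, Cr)
    return luma, chroma


cb = [[100, 102, 50, 52],
      [104, 106, 54, 56]]
print(subsample_chroma_2x2(cb))  # [[103.0, 53.0]]

full = samples_per_frame(3840, 2160, shared_by=1)
shared = samples_per_frame(3840, 2160, shared_by=4)
print(f"chroma kept: {shared[1] / full[1]:.0%}")          # chroma kept: 25%
print(f"total data kept: {sum(shared) / sum(full):.0%}")  # total data kept: 50%
```

The output matches the 75% colour loss described above, while luma is untouched; because luma makes up a third of the full-resolution samples, the overall saving works out to half the data rather than three quarters.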

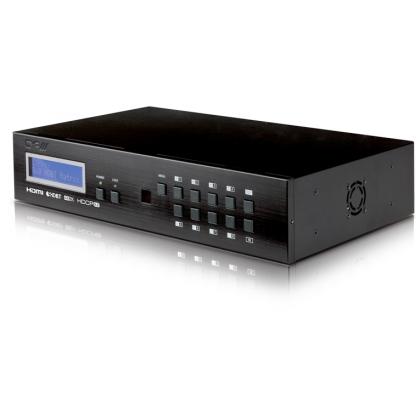

HDCP - the newest revision, HDCP 2.2, protects 4K UHD content. It is a more secure copy-protection protocol, designed to protect all the new content that film studios and TV production companies are currently investing in.
